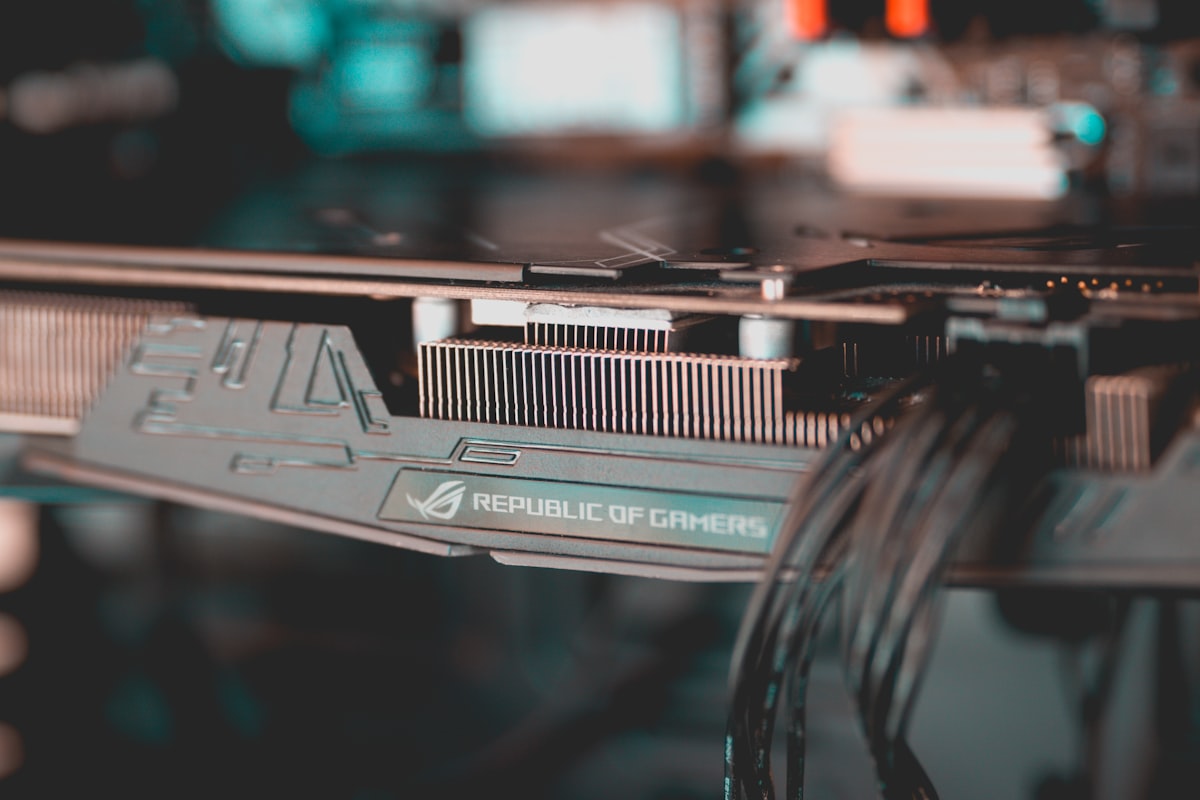

Enable GPU access in WSL2 Debian for your AI workloads

This post aims to centralize the information on how to use your Nvidia GPUs on Debian under WSL2 to train and run your AI/ML models. If you use Ubuntu, there is a nice guide here.

Requirements:

- WSL2

- Debian 11

- Windows 10 version 21H2 or later (check in: About -> Version)

- Nvidia GPU

- GPU drivers installed on Windows (find your own)
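The Linux-side requirements above can be checked from inside the distro. The following is a minimal sketch, assuming that a WSL2 kernel reports a version string containing "WSL2" (true for the stock Microsoft kernel, not necessarily for custom ones) and that `/etc/os-release` carries the usual `ID` and `VERSION_ID` fields. The helper names `is_wsl2`, `debian_major` and `read_text` are made up for this example.

```python
import re
from pathlib import Path


def is_wsl2(proc_version: str) -> bool:
    """Heuristic: stock WSL2 kernels report '...-microsoft-standard-WSL2'."""
    return "wsl2" in proc_version.lower()


def debian_major(os_release: str):
    """Return the Debian major version from /etc/os-release text, or None."""
    fields = dict(
        line.split("=", 1) for line in os_release.splitlines() if "=" in line
    )
    if fields.get("ID", "").strip('"') != "debian":
        return None
    match = re.match(r"\d+", fields.get("VERSION_ID", "").strip('"'))
    return int(match.group()) if match else None


def read_text(path: str) -> str:
    """Read a file if it exists, otherwise return an empty string."""
    p = Path(path)
    return p.read_text() if p.exists() else ""


if __name__ == "__main__":
    print("WSL2 kernel:", is_wsl2(read_text("/proc/version")))
    print("Debian major version:", debian_major(read_text("/etc/os-release")))
```

The Windows version and the Windows-side GPU driver still have to be checked on the Windows host.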

Update WSL2

Source: Run Linux GUI apps on the Windows Subsystem for Linux (Microsoft docs)

- Open PowerShell as admin

wsl --update

- Restart

wsl --shutdown
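Once WSL has restarted, you can check whether the Windows driver is exposed inside the distro. This is a rough sketch, assuming the usual WSL2 layout where the GPU shows up as `/dev/dxg` and the driver libraries are mounted under `/usr/lib/wsl/lib`. The function name `wsl_gpu_status` and its `root` parameter are hypothetical and exist only to make the check easy to try out.

```python
from pathlib import Path


def wsl_gpu_status(root: str = "/") -> dict:
    """Report whether the WSL2 GPU device and CUDA driver library are visible."""
    base = Path(root)
    lib_dir = base / "usr/lib/wsl/lib"  # assumed mount point of the Windows driver libs
    return {
        "dxg_device": (base / "dev/dxg").exists(),
        "libcuda": lib_dir.is_dir() and any(lib_dir.glob("libcuda.so*")),
    }


if __name__ == "__main__":
    for name, present in wsl_gpu_status().items():
        print(f"{name}: {'found' if present else 'missing'}")
```

If either entry is missing, the problem is more likely on the Windows side (driver or WSL version) than in Debian.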

Install the custom CUDA drivers

Source: CUDA toolkit documentation (Nvidia docs), Installer for debian

We are going to install the CUDA packages from Nvidia's own repository instead of the ones provided by the usual Debian packages. This is needed for WSL2 compatibility.

# Remove old driver keys

sudo apt-key del 7fa2af80

sudo apt install -y software-properties-common

wget https://developer.download.nvidia.com/compute/cuda/repos/debian11/x86_64/cuda-keyring_1.0-1_all.deb

sudo dpkg -i cuda-keyring_1.0-1_all.deb

sudo add-apt-repository contrib

sudo apt update

sudo apt -y install cuda

Test it
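Before building anything, it can help to confirm where the CUDA compiler ended up. The snippet below is a small sketch, assuming the toolkit uses the default `/usr/local/cuda` prefix. The helper name `find_nvcc` is invented for this example.

```python
import shutil
from pathlib import Path


def find_nvcc(cuda_home: str = "/usr/local/cuda"):
    """Return the path to nvcc from PATH or from cuda_home/bin, or None."""
    on_path = shutil.which("nvcc")
    if on_path:
        return on_path
    candidate = Path(cuda_home) / "bin" / "nvcc"
    return str(candidate) if candidate.is_file() else None


if __name__ == "__main__":
    nvcc = find_nvcc()
    print("nvcc:", nvcc if nvcc else "not found")
```

If `nvcc` is only found under the install prefix, adding that `bin` directory to your `PATH` makes it available in new shells.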

git clone https://github.com/nvidia/cuda-samples

cd cuda-samples/Samples/1_Utilities/deviceQuery

make

./deviceQuery

cd ../../../..

rm -rf cuda-samples

You should see something like this:

./deviceQuery Starting...

CUDA Device Query (Runtime API) version (CUDART static linking)

Detected 1 CUDA Capable device(s)

Device 0: "NVIDIA GeForce RTX 3090"

CUDA Driver Version / Runtime Version 11.7 / 11.7

CUDA Capability Major/Minor version number: 8.6

Total amount of global memory: 24576 MBytes (25769279488 bytes)

(082) Multiprocessors, (128) CUDA Cores/MP: 10496 CUDA Cores

GPU Max Clock rate: 1695 MHz (1.70 GHz)

Memory Clock rate: 9751 Mhz

Memory Bus Width: 384-bit

L2 Cache Size: 6291456 bytes

Maximum Texture Dimension Size (x,y,z) 1D=(131072), 2D=(131072, 65536), 3D=(16384, 16384, 16384)

Maximum Layered 1D Texture Size, (num) layers 1D=(32768), 2048 layers

Maximum Layered 2D Texture Size, (num) layers 2D=(32768, 32768), 2048 layers

Total amount of constant memory: 65536 bytes

Total amount of shared memory per block: 49152 bytes

Total shared memory per multiprocessor: 102400 bytes

Total number of registers available per block: 65536

Warp size: 32

Maximum number of threads per multiprocessor: 1536

Maximum number of threads per block: 1024

Max dimension size of a thread block (x,y,z): (1024, 1024, 64)

Max dimension size of a grid size (x,y,z): (2147483647, 65535, 65535)

Maximum memory pitch: 2147483647 bytes

Texture alignment: 512 bytes

Concurrent copy and kernel execution: Yes with 6 copy engine(s)

Run time limit on kernels: Yes

Integrated GPU sharing Host Memory: No

Support host page-locked memory mapping: Yes

Alignment requirement for Surfaces: Yes

Device has ECC support: Disabled

Device supports Unified Addressing (UVA): Yes

Device supports Managed Memory: Yes

Device supports Compute Preemption: Yes

Supports Cooperative Kernel Launch: Yes

Supports MultiDevice Co-op Kernel Launch: No

Device PCI Domain ID / Bus ID / location ID: 0 / 9 / 0

Compute Mode:

< Default (multiple host threads can use ::cudaSetDevice() with device simultaneously) >

deviceQuery, CUDA Driver = CUDART, CUDA Driver Version = 11.7, CUDA Runtime Version = 11.7, NumDevs = 1

Result = PASS

That should be it!
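If you want to check this output in a script rather than by eye, the following sketch pulls out the two lines that matter: the detected device names and the final result. It assumes the output format shown above. `parse_device_query` is a hypothetical helper, not part of the CUDA samples.

```python
import re


def parse_device_query(output: str) -> dict:
    """Extract device names and the pass/fail result from deviceQuery output."""
    devices = re.findall(r'^\s*Device \d+: "(.+)"', output, flags=re.MULTILINE)
    return {
        "passed": "Result = PASS" in output,
        "devices": devices,
    }


if __name__ == "__main__":
    sample = (
        "Detected 1 CUDA Capable device(s)\n"
        'Device 0: "NVIDIA GeForce RTX 3090"\n'
        "Result = PASS\n"
    )
    print(parse_device_query(sample))
```

In practice you would feed it the text captured from running `./deviceQuery`, for example through `subprocess.run(..., capture_output=True, text=True)`.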

Extra: TensorFlow

If you have TensorFlow installed, you can also check that it sees the GPU by running the following in Python:

import tensorflow as tf

tf.config.list_physical_devices('GPU')

If you see a list like [PhysicalDevice(name='/physical_device:GPU:0', device_type='GPU')], then everything works correctly.

INFO: You might need to install TensorFlow via conda, as explained in the documentation.
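The TensorFlow check can also be wrapped in a small script that copes with TensorFlow being absent from the current environment. This is only a sketch. The function name `tensorflow_gpus` is made up here, and the only TensorFlow call it relies on is `tf.config.list_physical_devices`.

```python
import importlib.util


def tensorflow_gpus():
    """Return the GPU names TensorFlow sees, or None if TensorFlow is missing."""
    if importlib.util.find_spec("tensorflow") is None:
        return None
    import tensorflow as tf  # imported lazily so the script runs without it

    return [device.name for device in tf.config.list_physical_devices("GPU")]


if __name__ == "__main__":
    gpus = tensorflow_gpus()
    if gpus is None:
        print("TensorFlow is not installed in this environment")
    elif gpus:
        print("GPUs visible to TensorFlow:", gpus)
    else:
        print("TensorFlow is installed but sees no GPU")
```

An empty list with a passing deviceQuery usually points at the TensorFlow installation rather than at the WSL2 setup.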
